Pseudonymisation Podcast

Timely discussions with industry experts about the requirements for and the benefits of Pseudonymisation

Episode No. 2

Episode No. 2: Pseudonymisation for Fourth Industrial Revolution (4IR) Data

Gary LaFever

This is Gary LaFever, CEO of Anonos, and it's my pleasure to welcome you today to the second episode of the Pseudonymisation Podcast. We have with us today Anne Flanagan, Data Policy and Governance Lead for the Centre for the Fourth Industrial Revolution at the World Economic Forum, together with Andy Splittgerber, Technology and Privacy Lawyer with Reed Smith’s Munich office. I have been very fortunate to work with Anne and others at the World Economic Forum on a number of projects - the Data for Common Purpose Initiative, Data Intermediaries Projects, as well as Smart Cities Projects. Anne, could you please touch upon the need and importance of accurate data that is reflective and has high fidelity for projects, such as those that the World Economic Forum is engaged in as a backdrop for our discussion today on Statutory Pseudonymisation?

Anne Flanagan 00:58

Absolutely. Thank you, Gary. A pleasure to be here today. Thank you for having me on the podcast and indeed great to work with you through the Forum. Thanks for mentioning some of our projects there. I'm based in our Centre for the Fourth Industrial Revolution, so we really look at this new digital revolution that we've all been coming through over the past few years, where our physical and online worlds start to merge, and what that means is that we are online all of the time in terms of how we do business on a day-to-day basis and how we communicate with each other. I'm in San Francisco right now and Andy’s in Munich, for example. And you really start to see not just a desire but a need by businesses and by people to be online, and the scale and ubiquity of the collection, processing, and movement of vast quantities of data worldwide is absolutely unprecedented and will only continue to grow at an exponential rate. The reality is that a lot of the policies that we have - and when I talk about “we,” I mean worldwide, we as humanity - are really based upon norms of the 1990s or what you might call the Third Industrial Revolution, where we had the Internet, but it was a very explicit choice as to whether we went online or whether we didn't go online. I think you could argue at this point in time that we're pretty much always online. And even if you're in a smart city, and you mentioned Smart Cities there, for example, maybe there is a sensor or maybe there's a camera that's tracking your every movement as you go about your day-to-day life. You and I would say that we're not online when that happens, but we are, and maybe there is a good reason for that. Maybe the government is trying to manage traffic. Maybe we're trying to measure fuel emissions. Maybe it's a case of ensuring that there is adequate schooling depending on the movement of a lot of people in an area. 
Use cases are absolutely infinite, but I think the core difference between what's happening now and what happened previously is really that the reliance upon data is very, very different. What does that mean? That means that the fidelity and provenance of data are crucial, because the data is going into what we would call the data value chain, and that's the idea that once you start using data, it can be used, reused, transferred, etc., effectively ad infinitum. If that data is not properly collected or not lawfully collected, not only is that harmful and problematic for people, it's a huge risk to business. And secondly, when we look at the innovations of the Fourth Industrial Revolution, they are what we might term data hungry. They rely upon data that you process, potentially with machine learning and the use of AI. And if you're going to develop a product or a solution that's based upon learning and getting insights from data, and your data is rubbish - you know, the famous phrase, “Garbage in, garbage out.” Industry needs access to verifiable, traceable data that has proper provenance, and that is also important for the protection of people.

Gary LaFever 03:57

Well said, Anne. And one of the things you touched upon there was the Fourth Industrial Revolution and how it's different from what preceded it because different data flows are now intermingled. When are we online and when are we not? And so, it really highlights the need for protections that flow with the data and protect the data, regardless of where and how it's being used, and during this call we use the term Statutory Pseudonymisation. What we mean by Statutory Pseudonymisation is Pseudonymisation as defined in statutes. Now, the first statute to define it was in fact the EU GDPR. The UK now, with its own UK GDPR, has that as well, as do South Korea, Japan, and four US States - California, Virginia, Colorado, and Utah. Andy, would you please just touch upon, for some of the lay people in the audience, what it means and requires to satisfy a statutorily defined term and how that's different from how the term might be used in conversation?

Andreas Splittgerber 04:55

Yeah, of course. Thanks Gary and thanks Anne for doing this today, and I'm very happy to be a part of the session. The terms Pseudonymisation and anonymisation are a great example, one I always hear when I give talks. There is the question or the statement: “Hey, we're anonymising our data. We're removing the name and we are replacing it with Mickey Mouse. And then, this is all perfect. So, we’re all good, aren’t we?” This is like the lay view. And then, you come to say: “Well, under the law, this is not enough. You need to meet the requirements under the GDPR, which among other things says you need to have technical and organisational measures in place to handle the pseudonymised aspects, and just replacing the name with Mickey Mouse does not achieve that.” And so, this example wouldn’t meet those requirements. It’s definitely not anonymisation and likely or definitely not Pseudonymisation. And what it means then, from the lawyer's perspective, is whether we can benefit from the advantages or the consequences that are attached to Pseudonymisation under the law. So, only if you meet the definition of Pseudonymisation in Article 4 of the GDPR can you also benefit from the consequences of Pseudonymisation: justification of certain data processing, meeting certain IT security requirements, meeting the principles of data correctness and data minimisation, and there are other areas in the GDPR where this really helps, including data subject rights. So, that’s the difference.
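
The contrast Andy draws can be illustrated with a minimal Python sketch. It is an illustration only: the record fields, the `naive_mask` and `pseudonymise` helpers, and the choice of HMAC-SHA256 are assumptions made for this example and were not discussed in the episode. Whether any implementation meets the GDPR Article 4(5) definition also depends on organisational measures and on the indirect identifiers left in the data, which code alone cannot settle.

```python
import hashlib
import hmac
import secrets


def naive_mask(record: dict) -> dict:
    """The 'Mickey Mouse' approach: overwrite the name, leave everything else."""
    masked = dict(record)
    masked["name"] = "Mickey Mouse"
    return masked


def pseudonymise(record: dict, key: bytes, direct_identifiers: tuple) -> dict:
    """Replace each listed direct identifier with a keyed token (HMAC-SHA256).

    The key plays the role of the 'additional information': without it the
    tokens cannot be recomputed from a known name or email address.
    """
    out = dict(record)
    for field in direct_identifiers:
        value = str(record[field]).encode("utf-8")
        out[field] = hmac.new(key, value, hashlib.sha256).hexdigest()[:16]
    return out


# Hypothetical key; in practice it would be stored separately from the data
# and protected by access controls (the technical and organisational measures).
key = secrets.token_bytes(32)

record = {"name": "Alice Example", "email": "alice@example.org", "city": "Munich"}

masked = naive_mask(record)
pseudo = pseudonymise(record, key, ("name", "email"))

print(masked)  # the email address still points straight at the person
print(pseudo)  # name and email are now tokens; city is left as-is
```

In the naive version the email address is untouched, so the record is still trivially attributable. In the keyed version the direct identifiers become tokens, although the remaining `city` field shows why indirect identifiers still need separate assessment.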

Gary LaFever 06:48

Well said. And it's interesting, the complexity of modern data flows and the fact that the Fourth Industrial Revolution is indicative of these data flows intermingling. It also highlights the fact that there are silos between different perspectives, and the definition, meaning, intent, benefit, and burden of Statutory Pseudonymisation almost require you to have a part lawyer perspective and a part data scientist perspective. The test of Statutory Pseudonymisation, that it is not possible to re-attribute the data to individuals without access to additional information held separately by the data controller or its designee, is a very high standard, which a lawyer may not, in and of themselves, appreciate. Anne, if you could just touch upon how these barriers and silos that are breaking down are not just between different vertical industries, but also between different areas of expertise, and how, in order to future-proof the concepts that we are talking about, there has to be flexibility on behalf of everybody and an openness to the sophistication of the issues that we are dealing with.

Anne Flanagan 07:55

Absolutely, Gary. Yeah, it was basically a founding principle of the World Economic Forum Centre for the Fourth Industrial Revolution that these issues around data privacy, innovation, and the Fourth Industrial Revolution are unprecedented. And because they are unprecedented and they do break down the silos that you mentioned, it requires a multi-stakeholder community to develop the answers. That is what we do. We work with governments. We work with businesses, academics, and civil society actors. And we provide a space for them to design potential solutions to these problems. It's really, really challenging and the reason it's so challenging is, if you think about it from a couple of different perspectives, the first one is that, as we mentioned already, the use of data is now so ubiquitous that it's affecting potentially everybody on earth and every business on earth, so the number of different business models that are affected is just enormous. Secondly, there are the different perspectives on how data should be treated and handled. We are getting into a space that's really, really sophisticated and it's great to see a solution like Pseudonymisation, which is a very sophisticated solution, and we necessarily need those types of sophisticated solutions. But if you are looking at what you can see there under the GDPR, which is the combination of legal expertise and data science expertise, few people have that. It's becoming more and more its own field in its own right. That's just one example. And to really be able to tackle these problems and to try to come up with solutions, chances are no single person has the answer. Chances are, you need collaboration. That's true when it comes to designing policy. It's true when it comes to designing solutions. It's also true in the C-Suite, where you need different perspectives to try to understand what the immediate challenges are. 
What is really encouraging to see is that, if you want to talk specifically about the GDPR, we do see an open recognition of the role of data science and of privacy engineering in improving this space, and a recognition that it's not just a legal problem anymore. That's largely really positive because it's actually quite dangerous to frame it as just a legal problem. It means that companies can disassociate themselves from responsibility and just, you know, “leave it up to the lawyers to solve.” That's not the best way to innovate. The best way to innovate is obviously to be responsible and to do right by the company. The same thing is true for people. It means that it is relevant for everybody and relevant for human-technology interaction at scale. So, in short, it is important to have different perspectives because the implications are so varying and so nuanced.

Gary LaFever 10:40

With that, Andy, I know you and I have talked in the past about the need for accurate data and you've mentioned some use cases related to AI. Do you want to mention those and just kind of touch upon the benefits that Pseudonymisation might bring in those circumstances?

Andreas Splittgerber 10:54

Yeah, so we are working with a lot of clients on AI projects, be it for example in the area of self-driving cars or in the health area, the development of certain health products, and we also assisted in Europe on the Corona App, which also contained a big portion of Pseudonymisation, probably a bit too much, probably too much data minimisation. It could have been more successful with a bit more data. So, these are the concepts where you really need a lot of data, and often it's difficult to be permitted to process this data under other GDPR concepts, because you would need consent, and often you don't know whether you will even get consent, or it's difficult to anticipate the purposes for which you will process the data later on. Then, you go into the area of: How do we get the data we have into like a data lake, you know, for the big data collection to then do the AI or machine learning? And there, the justification for example under GDPR Article 6(4) is the change of purpose, so you're changing the purpose because originally you were helping the car to navigate through a city. That was the purpose, and the changed purpose is now developing a nice AI solution or research, so that's a change. So, you need to check: “Am I permitted to do this under GDPR?” And here, Pseudonymisation, the real GDPR Pseudonymisation, is mentioned as one of the areas where this can be permitted, and also in the balancing of interests justification under Article 6(1)(f), where it is also accepted that Pseudonymisation may strike the right balance of interests to then permit such data processing. Why is this being done? And for example, why are we not just anonymising, removing all the data? That’s often not very helpful in these types of projects because you want to update certain datasets with data that develops afterwards, so you need to find the right persons in the data lake to add the updated information. 
So, in this area, Pseudonymisation as defined under the GDPR is one of the means that we see as a solution very often.
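
Andy's point about updating datasets later can be sketched in a few lines of Python. This is a hedged illustration, not a description of any system mentioned in the episode: the `token` and `apply_update` helpers, the in-memory `data_lake` dictionary, the hard-coded demo key, and the identifier `subject-001` are all hypothetical. The idea shown is that the party holding the key can route new data to the right pseudonymised record, while the data lake itself never stores the original identifier.

```python
import hashlib
import hmac


def token(key: bytes, identifier: str) -> str:
    """Derive a stable pseudonym for an identifier using a secret key."""
    return hmac.new(key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


# Hypothetical key, hard-coded only for the demo. It stands in for the
# 'additional information' that would be kept separately by the controller.
CONTROLLER_KEY = b"demo-key-held-separately-by-the-controller"

# The data lake stores records under tokens only, never the raw identifier.
data_lake = {token(CONTROLLER_KEY, "subject-001"): {"events": ["initial trip"]}}


def apply_update(lake: dict, key: bytes, identifier: str, event: str) -> str:
    """Add later-arriving data to the matching pseudonymised record.

    Only a caller holding the key can compute the token that locates the
    record; the lake is created or extended under that token.
    """
    t = token(key, identifier)
    lake.setdefault(t, {"events": []})["events"].append(event)
    return t


t = apply_update(data_lake, CONTROLLER_KEY, "subject-001", "follow-up trip")
print(data_lake[t]["events"])
```

With fully anonymised data there would be no stable token to look up, which is the practical limitation Andy describes. Calling `apply_update` with a different key would not find the existing record and would create a new, unlinked one instead.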

Gary LaFever 13:26

Thank you, Andy. So, Andy, what’s interesting in your description is you even used the balancing of interest test, which obviously is very relevant for Article 6(1)(f), but there's a balancing here almost like a child's teeter-totter, a toy, where on the one end you mentioned that the Corona App perhaps had too much data minimisation and therefore, there wasn’t enough identifiability of data. So, that is one area where you are trying to balance. And on the other end, when the situation warrants it and it’s justified and authorised, you actually do want, if permissible and appropriate, to link back to individuals. An appropriate balancing of interest is necessary between processing too little and processing too much personal data. Data models require sufficient and accurate data so that they are representative of real-world conditions, which is necessary to produce accurate and representative results that we can all live with, perhaps for decades to come. Yet we need technical controls to ensure that the data is processed in a manner that respects the fundamental personal rights and privacy of individuals. As highlighted by Anne, in today's Fourth Industrial Revolution data is increasingly processed in multiple decentralised and intermingled ways, with data coming from numerous sources and silos. Yet, traditional approaches to protecting data, as noted by Andy oftentimes going under the general term anonymisation, are literally predicated on limiting who has access to the data and limiting where the data flows. Well, this is just inconsistent with the reality of the Fourth Industrial Revolution, which is all about the free flow of information. This is oftentimes referred to as decentralised processing, and what that means is you can't always control or centralise where the processing occurs. This is why new approaches to protecting data, what we call Fourth Industrial Revolution or 4IR technology protections, are required. 
So, some of the types of protections that are oftentimes mentioned as 4IR technologies are blockchain, NFTs, and Statutory Pseudonymisation. Our conversation today is focused on the last of these, Statutory Pseudonymisation, because it enables the controls to flow with the data wherever it goes and wherever it is used. Statutory Pseudonymisation refers to what was initially defined within the EU GDPR as Article 4(5) Pseudonymisation. It has since been embraced by other countries. Obviously, the UK after Brexit has its own UK GDPR. But also, South Korean and Japanese privacy laws include similar definitions of Pseudonymisation, as do four US States - California, Virginia, Colorado, and Utah. In this podcast, we looked at and discussed a number of ways in which this term, Pseudonymisation, as specifically enumerated and defined in the statutes, can open up new vistas of data innovation and create value for society, companies and individuals alike by helping to reconcile the tensions between protecting data and monetising or otherwise maximising the utility of the data. Thank you very much, Anne and Andy, for joining us today. We are certain that everyone in the audience appreciated and benefited from your insights and perspective, and we hope that everyone will check out the initial episode of the Pseudonymisation Podcast, which highlights how very different the requirements for and the benefits of Statutory or GDPR Pseudonymisation are from what most people think. Thank you again for joining us and have a great day.

Key Takeaways

Fourth Industrial Revolution (4IR) technologies like Statutory Pseudonymisation are critical for the fidelity and provenance of information required for data value chains. Once you start using data, it can be used, reused, transferred, etc., effectively ad infinitum. If that data is not properly and lawfully collected, not only is that harmful and problematic for people, but it's also a huge risk to business.

Pseudonymisation has many benefits under the GDPR and similar privacy laws, including support for Article 6(4) processing beyond the original purpose of collection, Article 6(1)(f) Legitimate Interests balancing of interests, Article 32 Security, and others. And, when it is authorised and permissible, you can update and augment original data sources with insights and innovations related to individuals.

Traditional approaches to protecting data are predicated on limiting who has access to data and limiting where information flows. This is inconsistent with the reality of 4IR free-flowing (or decentralised) data processing. Statutory Pseudonymisation is a 4IR technology that protects data when in use no matter where it travels or how it is used, enabling maximum data innovation and protection.
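
To make the idea in the takeaways above a little more concrete, here is a minimal Python sketch of the general pattern behind the GDPR Article 4(5) definition: the shared data carries only pseudonyms, while the "additional information" needed to attribute it to a person is kept separately and released only when authorised. Everything in it is an assumption for illustration: the function names (`make_pseudonym`, `pseudonymise`, `relink`), the record fields, and the use of a keyed HMAC are hypothetical choices. It is not the method discussed by the speakers or used by any vendor, and it is not a statement of what satisfies the statutory requirements.

```python
import hashlib
import hmac
import secrets


def make_pseudonym(identifier: str, secret_key: bytes) -> str:
    """Derive a keyed pseudonym. Without secret_key it cannot be recomputed."""
    digest = hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def pseudonymise(records, secret_key):
    """Split records into a shareable dataset and a separately held re-linking table.

    Assumption: each record is a dict with hypothetical "email" and "city" fields.
    """
    shareable = []
    relink_table = {}  # the "additional information", to be stored apart from the data
    for record in records:
        token = make_pseudonym(record["email"], secret_key)
        relink_table[token] = record["email"]
        shareable.append({"subject": token, "city": record["city"]})
    return shareable, relink_table


def relink(token, relink_table, authorised):
    """Return the original identifier only when the caller is authorised."""
    if not authorised:
        raise PermissionError("re-linking to an individual is not authorised")
    return relink_table[token]


if __name__ == "__main__":
    key = secrets.token_bytes(32)  # held by the controller, never shipped with the data
    people = [
        {"email": "alice@example.com", "city": "Munich"},
        {"email": "bob@example.com", "city": "San Francisco"},
    ]
    shared, table = pseudonymise(people, key)
    print(shared)  # contains pseudonyms and cities only
    print(relink(shared[0]["subject"], table, authorised=True))
```

In this sketch the shareable records can travel to other systems while the key and the re-linking table stay with the original controller, which is one simple way to picture controls that stay in place wherever the data is used. A real deployment would need far more than this, for example protection against re-identification through the remaining fields.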

Schrems II Knowledge Hub

RESOURCES TO ACHIEVE LAWFUL BORDERLESS DATA:

Top 8 Misconceptions

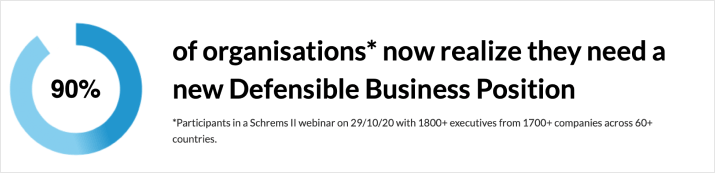

A number of serious misconceptions about the impact of Schrems II still remain, which makes it hard for organisations to comply.

This PDF download contains an explanation of the Top 8 Misconceptions surrounding Schrems II so that your organisation can eliminate misunderstandings to move forward. Downloadable and web versions are available.

Schrems II Legal Solutions Guidebook

The Schrems II Legal Solutions Guidebook is a critical asset for legal and privacy advisors working on GDPR and Schrems II compliance issues.

The Guidebook, which has been downloaded over 2,200 times, covers the key legal aspects and benefits of SCC-based Schrems II compliance, as well as a checklist, templates, and practical steps for organisations to follow.

Implementation Workshop

Schrems II workshop covering Implementation Roadmap & Legal Benefits, for organisations to understand how to implement Schrems II Supplementary Measures for SCCs. Over 2000 GCs, CPOs, DPOs, and Outside Legal Counsel participated from over 1700 companies across over 50 countries. To ensure you don't miss out on valuable information, a replay of this workshop is available for you.

LinkedIn Group

This LinkedIn group focuses on GDPR Pseudonymisation as highlighted by the EDPS and the EDPB as the most promising supplemental measure for Schrems II compliance. Also, visit www.pseudonymisation.com for more information on Pseudonymisation.

Anonos Solution Page

Anonos offers a technology solution that provides technical Supplementary Measures for Schrems II compliance. Explore Anonos GDPR-Pseudonymisation technology, so that you can support your organisation or clients towards a compliant solution. Only Anonos delivers three critical requirements for achieving a Defensible Business Position: Schrems II compliant Supplementary Measures and GDPR-compliant Pseudonymisation to future-proof Standard Contractual Clauses (SCCs).

IDC Report on Schrems II

This IDC report explains how Anonos’ BigPrivacy software is well placed to satisfy the Schrems II requirements for appropriate safeguards by creating pseudonymised versions of personal data (Variant Twins).

The IDC report covers the development of Anonos BigPrivacy, use cases, an explanation of Anonos' state-of-the-art Pseudonymisation technology, and market applicability of the solution. Read this IDC report to find out how Anonos technology can help you.

Pseudonymisation Blog

A timely collection of articles and perspectives that you will not find elsewhere. This content reflects topical issues gleaned from meetings and interactions with companies, regulators, legislators, and non-governmental organisations related to SCC-based compliance with Schrems II requirements.

Pseudonymisation.com Resource Page

Pseudonymisation is at the core of the Data Embassy principles, and is newly-redefined in the GDPR. Find out more about the importance of Pseudonymisation, as recommended by the EDPB as a Schrems II solution for protecting data in use, and how its application can help your organisation.

Executive & Board Risk Assessment Framework

This framework covers the crucial issues we address when working with these organisations to evaluate the ability to establish an immediately defensible position in compliance with Schrems II.

New Technology Controls Required

Relying on “Words Alone” by updating contracts and hoping for treaties produces unsustainable operations because no contract or treaty will remove the need for new technology controls to protect data when in use.

This Briefing covers how Anonos technology solves international cross-border legal challenges, enabling the highest data protection levels, accuracy, and utility on a global scale by complying with recommendations by the EDPB for GDPR-compliant Pseudonymisation.

Webinar: Presenting Risk Exposure to the C-Suite & Board

Schrems II risk mitigation strategies are critically needed by Boards of Directors, the C-Suite, and organisations as a whole. View this webinar to find out what the risks are, and what steps you need to take next to brief your executive team and board members.

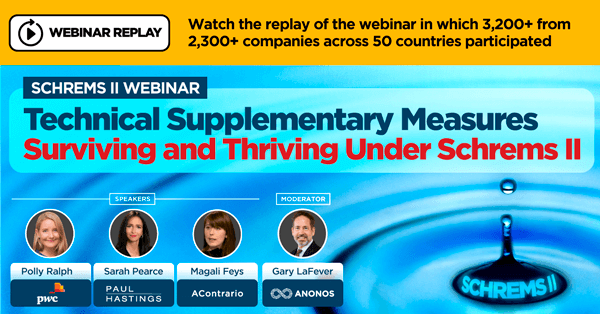

Webinar: Technical Supplementary Measures

Watch the replay of the Schrems II webinar, Technical Supplementary Measures Surviving and Thriving Under Schrems II, in which 3,200+ attendees from 2,300+ companies across 50 countries participated.

Webinar: Learn how Pseudonymisation can solve Schrems II challenges

Learn how GDPR Pseudonymisation can help your organisation achieve compliance while reaching business goals and objectives.

Memorandum to EDPB

Read the Pseudonymisation memo submitted to the EDPB in connection with public consultation 05/2021.

© 2024 Anonos. All rights reserved. Privacy Policy