The era of the metaverse is dawning with all sorts of issues. How do we ensure privacy, prevent violence and abuse, and leave no man behind?

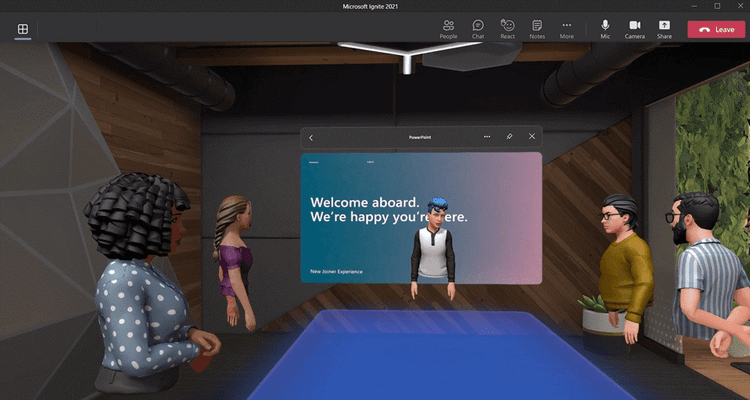

The metaverse might be only a commercial endeavor for many, yet it also comes with a promise to create a better society. But if we build it on old foundations, the metaverse will be the 3D representation of today's internet.

Educated and tech-savvy people might be able to thoroughly enjoy the new metaverse and take full advantage of it while preserving their privacy and human rights. Others would probably be at tech companies' mercy.

"Some people will have the metaverse experience equivalent to dial-up when others are on broadband. Or, there could be people with their early metaverse behaviors following them without the means to reset them. Imagine if our 2010 Twitter / Facebook activity determined our current online experiences? The metaverse could be like that for some," Gary LaFever, CEO of Anonos, said.

He emphasized two different models of the metaverse. In the first, companies simply move today's centralized model, where they control the communication and store user data centrally, to the metaverse, merely creating a 3D representation of today's internet, or web 2.0. The second model is a decentralized version built on web 3.0, where no central entity controls the data exchange and storage.

I sat down with LaFever, a lawyer and an expert in lawful borderless data, to discuss whether the metaverse will be any better in terms of privacy and human rights than today's internet.

What will the first metaverses look like?

We believe privacy has to be addressed for the metaverse to be sustainable and successful in the long term. And that's the combination of some centralized aspects and the benefits of a decentralized model. NFTs and blockchains are very powerful, but they do not enable privacy beyond consent.

We think it's very exciting. We believe the first metaverses will be centralized, so they'll be three-dimensional versions of web2.0. Eventually, people will move more and more towards the web3.0 version of the metaverse.

Big tech companies are criticized for their long history of trading data, but you say that the blockchain metaverse projects also do not guarantee your privacy?

When it comes to privacy, there are a lot of benefits to the blockchain. The fundamental of blockchain is immutability. That's how each block in the chain knows what came before: they can refer to it, they can rely on it, and that's what replaces centralized control. The problem with immutability is that it makes for easy reidentification.

If you only have what I refer to as a single-step approach to privacy, where you replace someone's name or any other identifier with the same token again and again and again, many studies show it's literally almost child's play to figure out who somebody is. And so, if that's not addressed by Facebook or anyone else in their metaverse, they will have the benefits of blockchain but also the downside of the blockchain.
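The reidentification risk LaFever describes can be sketched in a few lines of Python. This is an illustrative toy, not Anonos's method or anything defined by the GDPR: the function names, the sample users, and the per-context secrets are all assumptions made for the example. It contrasts a single static token per person with tokens that differ by context, and counts how many records an observer could join across two datasets.

```python
import hashlib
import hmac


def static_token(identifier: str) -> str:
    """Single-step approach: the same identifier always yields the same token."""
    return hashlib.sha256(identifier.encode()).hexdigest()[:12]


def context_token(identifier: str, context_key: bytes) -> str:
    """Context-specific token: keyed hash with a different secret per context."""
    return hmac.new(context_key, identifier.encode(), hashlib.sha256).hexdigest()[:12]


def linkable_tokens(dataset_a, dataset_b):
    """Tokens present in both datasets, i.e. records an observer can join."""
    return {r["token"] for r in dataset_a} & {r["token"] for r in dataset_b}


# Hypothetical users and activity, invented for illustration only.
users = ["alice@example.com", "bob@example.com"]
purchases = ["headset", "avatar skin"]
rooms = ["lobby", "arena"]

# Same token reused everywhere: shop and chat records line up trivially.
shop_static = [{"token": static_token(u), "purchase": p} for u, p in zip(users, purchases)]
chat_static = [{"token": static_token(u), "room": r} for u, r in zip(users, rooms)]

# Different secret per context (hypothetical keys): tokens no longer match.
shop_key = b"hypothetical-shop-secret"
chat_key = b"hypothetical-chat-secret"
shop_ctx = [{"token": context_token(u, shop_key), "purchase": p} for u, p in zip(users, purchases)]
chat_ctx = [{"token": context_token(u, chat_key), "room": r} for u, r in zip(users, rooms)]

static_links = linkable_tokens(shop_static, chat_static)
context_links = linkable_tokens(shop_ctx, chat_ctx)
print(len(static_links), len(context_links))
```

With the static token, every user's shop and chat records share a join key, so behavior can be combined into a profile and matched to a person. With per-context tokens, the two datasets share no keys unless someone holding the secrets deliberately relinks them.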

Will the lawmakers get there in time? Usually, regulations lag behind technology.

Ironically, I think this is probably the only time, to my knowledge, when it's the reverse. The GDPR has embedded in it a new concept: statutory pseudonymization. The word has been around for hundreds of years. Authors use pseudonyms, such as a nom de plume (a fake name). People think they know what pseudonymization means, but looking at how it was defined for the first time in EU law, the definition has embedded the ability to protect. Still, technology has been slow to adopt it.

In this instance, the GDPR anticipated some of the stuff. Many say anonymize your data, so you are outside of the GDPR. The reality is that pseudonymized data is still within the GDPR, but it has many statutory benefits. These are not loopholes; these are not workarounds. The GDPR rewards you if you can achieve that heightened level of protection, and it hasn't been something that many companies have focused on. Some have, don't get me wrong. You can fix blockchain, and you can help the metaverse.

Now, I can choose what social networks I use. Will I have the same choice with the metaverse? Or will it be given to me by default, either by my company or friends? Facebook might have emptied a long time ago, but I choose not to leave because I have built social circles there.

I think you will. But you need to take a step back. You need to fix what's broken in web2.0 before expecting it to be better in web3.0 and the metaverse. As you said, Facebook provides a lot of value to many people, interactions, and social engagement. However, again and again, they are criticized and penalized for continuing to process data the way they have. Why? Because while it provides all those benefits, it does it at the cost of privacy and fundamental rights of individuals.

You can't take that to the metaverse and expect that to get fixed. Metaverse players are fixing it as they go to the metaverse. It is very popular to "protect" people's data by replacing their names with a token in the blockchain. But it's the same token used everywhere. And so that's just a simple example that you need to fix before you go to the metaverse. But companies are looking to be participants and active in the metaverse, looking to address that problem. And those same corrections would also help in web2.0.

Are metaverse companies looking to build a better society with less inequality, or are these strictly just business endeavors?

For anything to be sustainable, you have to have a balance between rewarding the individuals, giving them opportunities to engage, socialize, and taking corporate benefits. If you look at Facebook, it's been very successful for a long time, but it's encountering problems now. It may take a while, but companies are looking to do what you are saying, and I think they will have a competitive business advantage while also rewarding the participants.

For example, there are several different approaches and discussions about whether people should be rewarded for the data they contribute, either financially or with non-currency rewards. For instance, you can go into certain places that other people can't. But the reality is, you are acknowledging that the data, the interactions, the perspectives, and the opinions that people are giving provide the ecosystem value. There are some concepts and approaches where people get rewarded for their data and protect the data, and that has to be balanced as well because you get a self-selection bias.

When you talk about ethics and data, it's multifaceted. If you make it so that people can buy privacy, then those that can't afford it will not have privacy. So that's not fair. You've now disadvantaged those who either don't fully understand or don't have the economic means to pay for it. So you need to be careful what you are offering to everyone and what you are offering to special groups because even without the intent, you may be disadvantaging people. Also, there's a lot of processing, a lot of AI that includes detailed information about individuals that's not necessary and may result in unintended bias that we may not know of for years, lifetimes, decades.

So it's about going about this in a structured way where you think through: What is it I'm trying to provide to the participants of the metaverse? What is it I am trying to get back? There's nothing wrong with getting something back, as long as there's a balance between the two and, in that balancing, you don't perpetuate biases or discrimination. You have to think through that.

You have to fix it now. If you don't, we will have more problems in the metaverse because people will have more opportunities to show different sides of themselves, and it actually could be worse rather than better. We firmly believe that these issues of ethical data processing, inequality, and discrimination, both intended and unintended, need to be addressed.

Will some people be left out due to technological illiteracy and even the cost of the technology needed? Will the metaverse be intuitive at one point so everyone can be a part of it?

It's our belief and hope that anyone could participate. There's a reason for companies, countries, and regions to try to make that happen in addition to treating people fairly. When you process data, the more representative of reality the data is, the greater fidelity it has to what's going on, and the more closely the results will correlate with reality.

Again, it's a self-selection bias. If you are processing only the data of affluent people in a given area, the results you get will be skewed and won't have widespread applicability. So while there will be a cost if there's some underwriting to provide technology to those in areas without the infrastructure, cost is not the only barrier: even if someone has cash or wealth, if they are not living in an area with the infrastructure, it is difficult to participate. So it's a combination of cost and infrastructure. There is a compelling societal and commercial benefit to getting that infrastructure more widespread and dispersed so that anybody can participate. People act more rapidly when there's an economic benefit to them.

Is there an agreement in the industry on what needs to be fixed in web 2.0?

The metaverse has to comply with all laws because you don't know where someone in the metaverse is from. They are still physically in their location, and they have certain rights. The fact that they have gone into this virtual sphere doesn't remove those rights. You can provide a balance so that the law applied in the metaverse is at the standard that satisfies all jurisdictions. In the same way, you can provide a baseline that's not a compromise. If you are willing to pay to be rewarded for your data and someone else is not, there's a level at which the two of you can interact, which is still of great value, and that is the baseline.

Rather than expecting the industry to come up with one standard, the standard should always treat everyone the same at the same level and then allow them to decide that they want to be treated differently.

This article originally appeared in cybernews. All trademarks are the property of their respective owners. All rights reserved by the respective owners.
